Disclaimer: All opinions expressed here are solely my own and do not represent those of my employer.

My jaw is ajar, like the front door of a house that's just been broken into. A fifth coffee has failed to coax my brain into action. It uses the caffeine kick to muster its remaining energy and waves the proverbial white flag. All I see are sinuous, undulating curves punctuated by open spaces, and lines of all orientations.

Like Cameron in Ferris Bueller's Day Off staring into a painting at the museum, I stare at the paper on my desk trying to make sense of it all. What does this all mean? In the movie, Cameron tries to tease out meaning in his life, while being completely lost inside the beautiful splashes of color in the painting.

Unlike his, my pursuit is a little less deep but denser.

I've been trying to read a machine learning (ML) paper for several hours. Like a rusty old Ford Fiesta, I keep sputtering and stopping at each speed bump.

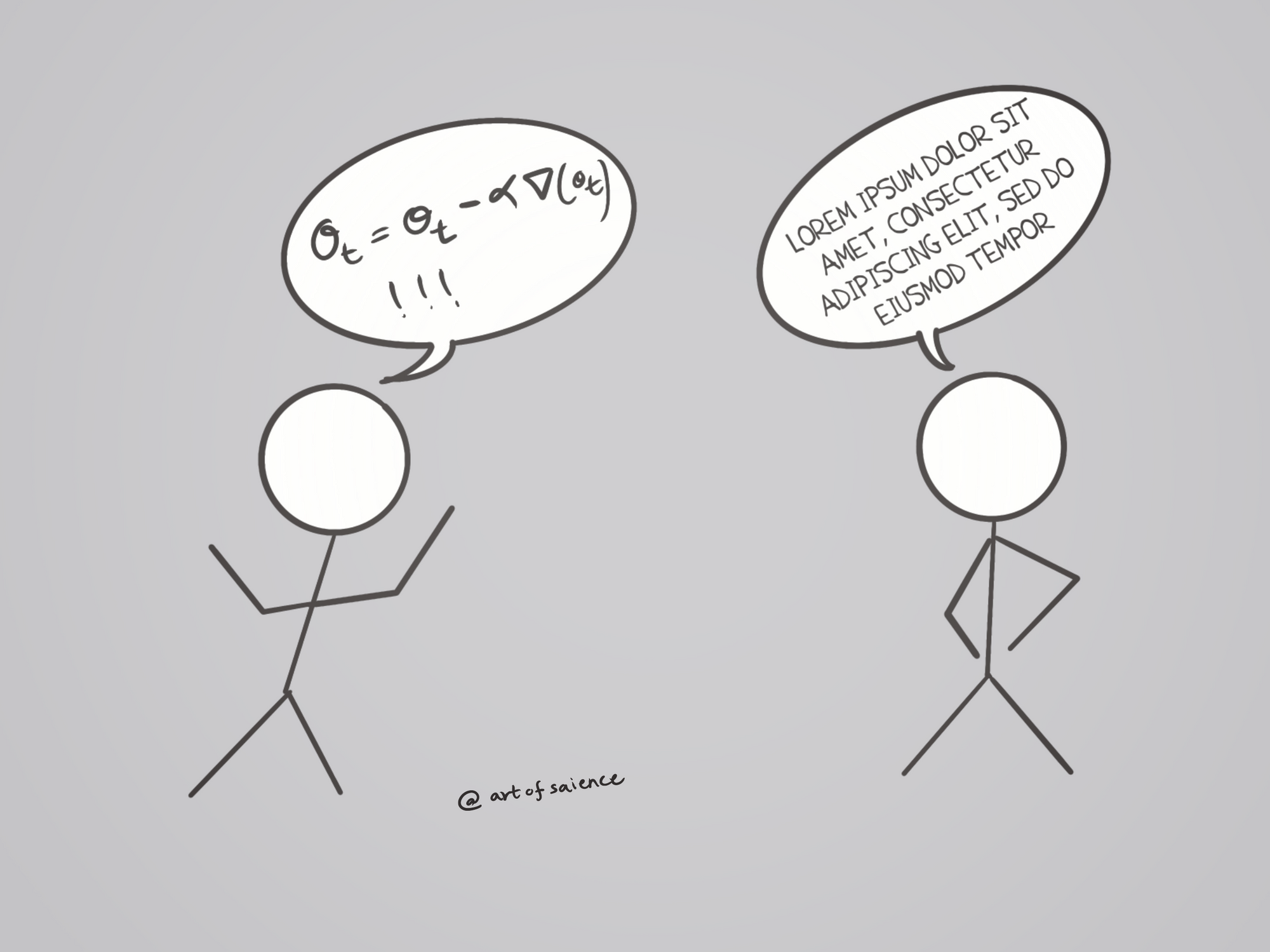

Squigglies smirk at me from the page, sticking their inky black tongues out. Yet another paper riddled with unnecessarily complicated equations. Some of these equations look like they've been copied from ancient scrolls that unlock the purpose of life (it isn't 42) or offer a solution to male pattern baldness.

Sadly, they reveal nothing of the former and accelerate the latter.

Finally, out of frustration, I ignored all the equations in this paper and read it again. There in all its glory was a simple but beautiful idea I could see clearly. Why then this pretense of complexity and sophistication?

ML feels hard enough, let alone having these monstrosities thrust into your visual cortex. This is one of the many impediments practitioners face when entering this mysterious realm.

A virtuous cycle of sharing insights simply and clearly, improving them, and resharing them carried us from the Stone Age to the age of machines.

So how did we end up with these? Not the kinds of equations that clarify, but these illegible, irritating knockoffs that muddy the pristine waters of research that quench our intellectual curiosity?

An Eulerian walk through history

Did the great minds of yore speak in symbols while discussing fascinating ideas? What would that even look like?

To find out, I pursued this quest down a ravine, then fell into a gorge, drove through a Starbucks, and finally spiraled into a rabbit hole which led me to the renowned mathematician, Leonhard Euler. In addition to his groundbreaking work in mathematics, Euler is also credited with introducing several notational conventions through the wide circulation of his many textbooks.

I can imagine they sold faster than Vogue and Us Weekly, given how prevalent these notations and their mischievous offspring, the squigglies, have become.

But Mr. Euler had nobler intentions than just becoming a social media influencer and writing Twitter threads about his solopreneur revenue. First, he wanted a succinct way to represent common variables and constants. Thus, he popularized the use of f(x) to denote a function of x, e for the base of the natural logarithm, now called Euler's number (Euler could share his digits before it was cool), and the Greek letter Σ for summations, among other things.

Why Greek letters? The ancient Greeks made several theoretical contributions to science and wrote in their own alphabet. Using it was probably a nod to their amazing work.

The second objective of standardizing notations was to promote brevity while preserving clarity. Why?

Here is a picture of Richard Feynman's thesis. Do you realize how long it would have taken him to graduate if he had to handwrite these equations without concise mathematical symbols to help him?

So, where were we? Aah yes, we know how Euler's notations spread. The big question, then, is why his proposals were so widely accepted.

In earlier times, math and science were taught in Greek and Latin.

Thus, these equations were concise ways to explain complex ideas in a language that was universally understood.

We no longer live in that world of universal understanding. Times have changed, as have the languages through which we communicate.

Squiggly Lovers who wear Prada and relish Ratatouille

One could argue that these notations are still adequate to explain ideas even today, provided that the reader is educated in this language of symbolic notations. Researchers could continue to use these elegant notations and the world would continue to progress as it has for millennia.

That would be a perfectly valid claim, provided that the intention of the researcher was to illuminate kindred spirits. Unfortunately, for some researchers, the equations in their papers are a facade of complexity. These people add complexity with the sole aim of getting their research published.

A simple and beautiful scientific hypothesis, once as fair as Snow White, morphs into the ugly witch who makes her eat the poisoned apple. Thus, squigglies are born.

They aren't entirely to blame, however. This behavior stems partly from the unreasonable standards of entry to prestigious conferences and journals. It is worsened by the sheer volume of papers submitted to these conferences.

Submitting your research to conferences and journals typically goes as follows. One squeezes oneself within an inch of one's life to produce a beautiful nugget of wisdom. One then eloquently explains this nugget of wisdom through the written media and supports it through illustrations and experimental validation. One then prays to the universal absolute. Finally, one submits the paper thus written to a committee of reviewers who guard entry to these venues. If these reviewers deem the paper worthy, one's work is published, one gets recognized as a credible voice in the field, and then one goes back to the drawing board to restart the process to sustain this acquired credibility.

If you've attempted to submit your research to an ML conference, you'd have come across an entity we call Reviewer #2 in the machine learning community.

Reviewer #2 is the secret love child of Miranda Priestly and Anton Ego.

Nothing satisfies their lofty standards. Nothing. They single-handedly shatter hopes and dreams with the precision of a surgical laser and the ruthlessness of a cheap tabloid journalist. Empirical work needs a theoretical basis and theoretical work needs more empirical validations completely unrelated to the theory being proposed.

One can handle repeated rejections only for so long.

Thus, simple ideas need to be repackaged as complex ones to get in the door. As readers, we are forced to see squiggly trees instead of the magnificent forests they once represented.

Deer in the headlights

New practitioners enter the field pre-intimidated. Some are terrified to read papers. After all, these papers make them feel stupid. They can't understand what's going on and walk away. That's really sad.

What's worse is that plenty of papers are beautiful, with clear explanations and elegant equations that put the finest haiku to shame. These get a bad rap from less experienced readers because they are notation-heavy and thus get miscategorized as squigglified papers. Want an example?

Before you start hearing the background music of the Psycho shower scene in your head, let me tell you what this equation represents.

This is one of the core building blocks of the neural networks that generate all the fancy art you've been seeing online. Here is what it represents in plain English:

Take an image x and add some noise to it. Then take that result and add some more noise to it. Repeat this process T times. See? Not so scary, is it?
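In the spirit of speaking in code, here is a minimal Python sketch of that plain-English description. It is an illustration under my own assumptions, not the method of any particular paper: the function name `add_noise_repeatedly`, the Gaussian noise, the `noise_scale` value, and the tiny four-pixel "image" are all made up for the example. Real image-generation models typically also rescale the image at every step, which this sketch skips.

```python
import random


def add_noise_repeatedly(image, T, noise_scale=0.1, seed=0):
    """Return [x_0, x_1, ..., x_T], where each x_t is x_(t-1) plus fresh noise.

    `image` is a flat list of pixel values. Gaussian noise and `noise_scale`
    are assumptions made for this illustration.
    """
    rng = random.Random(seed)  # seeded so the example is repeatable
    steps = [list(image)]      # x_0 is the original image
    for _ in range(T):
        previous = steps[-1]
        # Add a little independent noise to every pixel of the previous result.
        noisy = [pixel + rng.gauss(0.0, noise_scale) for pixel in previous]
        steps.append(noisy)
    return steps


x = [0.0, 0.5, 1.0, 0.5]  # a hypothetical 4-pixel "image"
steps = add_noise_repeatedly(x, T=10)
print(len(steps))  # the original image plus one noisy version per step
```

The loop is the whole idea: `steps[0]` is the clean image, and each later entry is a slightly noisier copy of the one before it.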

The universal language of today

Fast forward to today. We're no longer given a classical education. We've gone from understanding science in Greek and Latin to "It's all Greek and Latin to me". Oh, the irony.

What can we do about this?

Most if not all entering the ML field today have some experience with programming. Instead of speaking in squigglies, let's try to speak in code. An aspiring practitioner's eyes will light up when they're greeted by code instead of convoluted equations.

Just like Ray Barone tasting Debra's surprisingly good Braciole, they'll be much more receptive to reading papers and understanding the equations contained within them.

Research is for everyone. It should inspire, not intimidate.

We should always get our ideas across to others in the simplest way possible. Yes, we may need to use equations to write our papers. Yes, we will face the wrath of Reviewer #2. But consider sharing a code repository, a blog post, or a video that explains things in plain English so that others can better understand the idea.

Speak in the tongue of the world, and she shall reward you with name and fame.

Now that that's clear, I have a conference deadline coming up and I have to figure out how I can make this paper fancy. Which squiggly equations should I use?